Overview

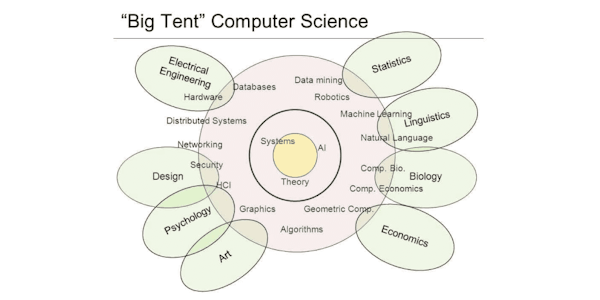

Probabilistic graphical models (PGMs) are a rich framework for encoding probability distributions over complex domains: joint (multivariate) distributions over large numbers of random variables that interact with each other. These representations sit at the intersection of statistics and computer science, relying on concepts from probability theory, graph algorithms, machine learning, and more. They are the basis for the state-of-the-art methods in a wide variety of applications, such as medical diagnosis, image understanding, speech recognition, natural language processing, and many, many more. They are also a foundational tool in formulating many machine learning problems.

This course is the first in a sequence of three. It describes the two basic PGM representations: Bayesian networks, which rely on a directed graph; and Markov networks, which use an undirected graph. The course discusses both the theoretical properties of these representations and their use in practice. The (highly recommended) honors track contains several hands-on assignments on how to represent some real-world problems. The course also presents some important extensions beyond the basic PGM representation, which allow more complex models to be encoded compactly.

Syllabus

- Introduction and Overview

- This module provides an overall introduction to probabilistic graphical models, and defines a few of the key concepts that will be used later in the course.

- Bayesian Network (Directed Models)

- In this module, we define the Bayesian network representation and its semantics. We also analyze the relationship between the graph structure and the independence properties of a distribution represented over that graph. Finally, we give some practical tips on how to model a real-world situation as a Bayesian network.

- Template Models for Bayesian Networks

- In many cases, we need to model distributions that have a recurring structure. In this module, we describe representations for two such situations. One is temporal scenarios, where we want to model a probabilistic structure that holds constant over time; here, we use Hidden Markov Models, or, more generally, Dynamic Bayesian Networks. The other is aimed at scenarios that involve multiple similar entities, each of whose properties is governed by a similar model; here, we use Plate Models.

- Structured CPDs for Bayesian Networks

- A table-based representation of a CPD in a Bayesian network has a size that grows exponentially in the number of parents. There are a variety of other forms of CPD that exploit some type of structure in the dependency model to allow for a much more compact representation. Here we describe a number of the ones most commonly used in practice.

- Markov Networks (Undirected Models)

- In this module, we describe Markov networks (also called Markov random fields): probabilistic graphical models based on an undirected graph representation. We discuss the representation of these models and their semantics. We also analyze the independence properties of distributions encoded by these graphs, and their relationship to the graph structure. We compare these independencies to those encoded by a Bayesian network, giving us some insight on which type of model is more suitable for which scenarios.

- Decision Making

- In this module, we discuss the task of decision making under uncertainty. We describe the framework of decision theory, including some aspects of utility functions. We then talk about how decision making scenarios can be encoded as a graphical model called an Influence Diagram, and how such models provide insight both into decision making and the value of information gathering.

- Knowledge Engineering & Summary

- This module provides an overview of graphical model representations and some of the real-world considerations when modeling a scenario as a graphical model. It also includes the course final exam.
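
The point made in the Structured CPDs module, that a table CPD grows exponentially in the number of parents, can be made concrete with a short sketch. The example below is not taken from the course materials: it assumes binary variables and uses noisy-OR as one illustrative structured CPD, and the function names (`table_cpd_rows`, `noisy_or_prob`, `expand_noisy_or`) are hypothetical.

```python
from itertools import product


def table_cpd_rows(num_parents, parent_card=2):
    """Number of parent configurations (rows) in a full table CPD."""
    return parent_card ** num_parents


def noisy_or_prob(parent_values, activation_probs, leak=0.0):
    """P(child = 1 | parents) under a noisy-OR model (illustrative choice).

    Each active parent i independently fails to switch the child on with
    probability 1 - activation_probs[i]; `leak` is the probability that the
    child is on when no parent is active.
    """
    p_off = 1.0 - leak
    for value, p in zip(parent_values, activation_probs):
        if value:
            p_off *= 1.0 - p
    return 1.0 - p_off


def expand_noisy_or(activation_probs, leak=0.0):
    """Expand the compact noisy-OR parameters into the equivalent full table."""
    k = len(activation_probs)
    return {
        cfg: noisy_or_prob(cfg, activation_probs, leak)
        for cfg in product((0, 1), repeat=k)
    }


if __name__ == "__main__":
    # Compare table rows (2**k) with noisy-OR parameters (k + 1, counting the leak).
    for k in (2, 5, 10, 20):
        print(f"parents={k:2d}  table rows={table_cpd_rows(k):8d}  noisy-OR params={k + 1}")
```

With 20 binary parents the full table has 2^20 rows, while the noisy-OR form needs only one parameter per parent plus the leak term. The price is a modeling assumption: each parent must influence the child independently of the others.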

Taught by

Daphne Koller

Reviews

4.1 rating, based on 18 Class Central reviews

4.6 rating at Coursera based on 1433 ratings

-

The course is interesting though hard, but lacks the great pedagogy of Andrew Ng in his "Machine Learning Course". - The syllabus logic is weird. - It is a lot about theory and lacks connection with concrete examples with some data and code. - When the…

-

Excellent lecturer who explains clearly pretty complex notions through carefully selected examples. Presented are many links to real-world applications. Unlike other courses on coursera, this one is not 'watered down'.

The course claims that 'the average Stanford student needs between 15 and 20 hours of work per week to complete the course'. This might be true for some students. In my personal experience this statement was exaggerated, but nevertheless I needed about 7h/week (60% of which was spent on the programming assignments for the advanced track). For a working professional this might be too much.

-

I found the programming assignments to be quite tough, or put differently, more difficult than any other Coursera course. If not for the generous help of other students in the forum, (a view echoed by many other students), it would be impossible to get through the assignments. In my case, I had to sometimes grind through the assignment to finish it in time, sometimes missing the intuition behind a method that was used in the assignment.

-

This class was interesting and it was very difficult. The problem was, it was difficult for all the wrong reasons. I could spend days trying to get the grading system to accept my programming assignment all to find out that I was missing an assumption that wasn't stated anywhere in the assignment write-up or the lecture videos. Sometimes you'd get lucky and somebody in the forums would figure out what the gotcha was early on.

-

This is not an easy course, so beware. The instruction is solid but you still need to reason through a lot on your own, and especially if you choose to complete the Honors programming section (which I highly recommend to prove to yourself that you r…

-

Immersing and challenging course. Advises lots of ways for further study in the field. Definitely, choose an advanced track with programming assignments. 20 hours per week - it's true, but not quite correct: not every week's material is equally complex, I'd say 20 hours is a peak load. Just try to go at least one week ahead of the deadline schedule and you'll be fine. The choice of Matlab/Octave may seem a bit archaic nowadays. I wish this course was accompanied with assignments in Python or R or Scala.

-

A very disappointing experience based on following:

1. Audio quality is extremely poor and made worse by the very high (almost painful) fluctuations in the teacher's pitch;

2. Many portions of the audio were unintelligible;

3. This was not designed to be a MOOC but slapped together from live lectures.

-

she's gotta nice voice very easy to listen to

good material too little over dressed

certainly a topic that one doesn't consider while computing, but is very necessary in a number of applications within an application

-

This course has been retired since the old platform was discontinued in June 2016. There seems little hope of it appearing on the new platform, which is a shame. The course paralleled a real Stanford graduate course.

-

She conveys hard stuff in a very lucid manner. One can learn so much about the application of probabilistic models. I love the class!
