Overview

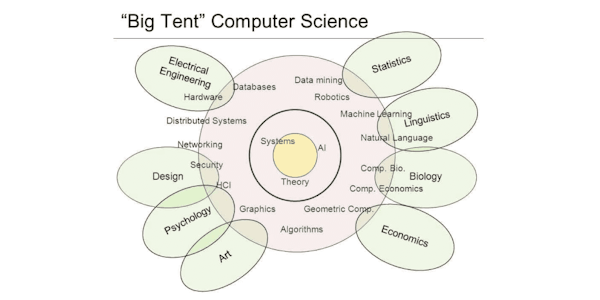

Probabilistic graphical models (PGMs) are a rich framework for encoding probability distributions over complex domains: joint (multivariate) distributions over large numbers of random variables that interact with each other. These representations sit at the intersection of statistics and computer science, relying on concepts from probability theory, graph algorithms, machine learning, and more. They are the basis for state-of-the-art methods in a wide variety of applications, including medical diagnosis, image understanding, speech recognition, and natural language processing. They are also a foundational tool in formulating many machine learning problems.

This course is the third in a sequence of three. Following the first course, which focused on representation, and the second, which focused on inference, this course addresses the question of learning: how a PGM can be learned from a data set of examples. The course discusses the key problems of parameter estimation in both directed and undirected models, as well as the structure learning task for directed models. The (highly recommended) honors track contains two hands-on programming assignments, in which key routines of two commonly used learning algorithms are implemented and applied to a real-world problem.

Syllabus

- Learning: Overview

- This module presents some of the learning tasks for probabilistic graphical models that we will tackle in this course.

- Review of Machine Learning Concepts from Prof. Andrew Ng's Machine Learning Class (Optional)

- This module contains some basic concepts from the general framework of machine learning, taken from Professor Andrew Ng's Stanford class offered on Coursera. Many of these concepts are highly relevant to the problems we'll tackle in this course.

- Parameter Estimation in Bayesian Networks

- This module discusses the simplest and most basic of the learning problems in probabilistic graphical models: that of parameter estimation in a Bayesian network. We discuss maximum likelihood estimation and the issues with it. We then discuss Bayesian estimation and how it can ameliorate these problems.

- Learning Undirected Models

- In this module, we discuss the parameter estimation problem for Markov networks (undirected graphical models). This task is considerably more complex, both conceptually and computationally, than parameter estimation for Bayesian networks, due to the issues presented by the global partition function.

- Learning BN Structure

- This module discusses the problem of learning the structure of Bayesian networks. We first discuss how this problem can be formulated as an optimization problem over a space of graph structures, and how to score different structures so as to trade off fit to data against model complexity. We then talk about how the optimization problem can be solved: exactly in a few cases, approximately in most others.

- Learning BNs with Incomplete Data

- In this module, we discuss the problem of learning models in cases where some of the variables in some of the data cases are not fully observed. We discuss why this situation is considerably more complex than the fully observable case. We then present the Expectation Maximization (EM) algorithm, which is used in a wide variety of problems.

- Learning Summary and Final

- This module summarizes some of the issues that arise when learning probabilistic graphical models from data. It also contains the course final.

- PGM Wrapup

- This module contains an overview of PGM methods as a whole, discussing some of the real-world tradeoffs when using this framework in practice. It refers to topics from all three of the PGM courses.
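
As a rough illustration of the parameter estimation module above, the sketch below contrasts a maximum likelihood estimate of a single discrete distribution with a Bayesian estimate. It is not taken from the course materials: the function names, the variable values, the toy data, and the choice of a symmetric Dirichlet prior with pseudo-count `alpha` are all assumptions made for the example. In a table-based Bayesian network, the same counting would be done separately for each configuration of a variable's parents.

```python
from collections import Counter

def mle_estimate(samples, values):
    """Maximum likelihood estimate: relative frequency of each value."""
    counts = Counter(samples)
    n = len(samples)
    return {v: counts[v] / n for v in values}

def bayesian_estimate(samples, values, alpha=1.0):
    """Posterior mean under a symmetric Dirichlet(alpha) prior.

    alpha is a hypothetical pseudo-count added to every value, so values
    that never appear in the data still receive nonzero probability.
    """
    counts = Counter(samples)
    n = len(samples)
    k = len(values)
    return {v: (counts[v] + alpha) / (n + alpha * k) for v in values}

# Toy data (made up for illustration): "high" is never observed.
values = ["low", "medium", "high"]
samples = ["low", "low", "medium", "low"]

mle = mle_estimate(samples, values)
bayes = bayesian_estimate(samples, values, alpha=1.0)

print("MLE:     ", mle)
print("Bayesian:", bayes)
```

With so few samples, the maximum likelihood estimate assigns probability zero to the unobserved value, which is one of the issues with it on sparse data. The prior pulls the Bayesian estimate toward uniform, and its influence shrinks as more data arrives.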

Taught by

Daphne Koller