Overview

Syllabus

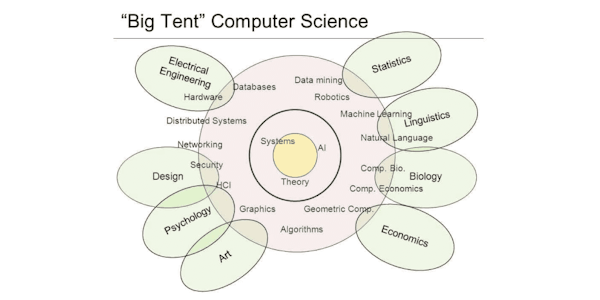

Intro

Outline

The ML model development process

Model Evaluation

Motivation

Common approach: importance weighting

Motivating example

Mandoline: Slice-based reweighting framework

The theory behind using slices

More formally...

Density Ratio Estimation

Experiments: tasks

Experiments: compare to reweighting on x

Summary

Taking a step back - how do we get slices? What are sli

Measuring model performance

Hidden Stratification: Approach

ML model development process, revisited

Another angle - how else can we evaluate?

"Closing the loop" - how do we update?

Taught by

Stanford MedAI